By Jessiah Hulle[1]

_____

“Success in creating AI could be the biggest event in the history of our civilization. . . . [But a]longside the benefits, AI will also bring dangers.” – Stephen Hawking (2016)[2]

Two years ago, various news outlets reported that Amazon uses artificial intelligence (“AI”) “not only to manage workers in its warehouses but [also] to oversee contract drivers, independent delivery companies and even the performance of its office workers.” The AI is a cold but efficient human resources manager, comparing employees against strict metrics and terminating all underperformers. “People familiar with the strategy say . . . Jeff Bezos believes machines make decisions more quickly and accurately than people, reducing costs and giving Amazon a competitive advantage.”[3]

This practice is no longer unusual. In fact, AI-assisted human resources (“HR”) work is now commonplace. Recently, over 70% of human resources leaders surveyed by Eightfold AI confirmed that they use AI for HR functions such as recruiting, hiring, and performance management. In that same survey, over 90% of HR leaders stated an intent to increase future AI use, with 41% indicating a desire to use AI in the future for recruitment and hiring.[4] Already, “three in four organizations boosted their purchase of talent acquisition technology” in 2022 alone and “70% plan to continue investing” in 2023, regardless of a recession.[5] Research by IDC Future Work predicts that by 2024, “80% of the global 2000 organizations will use AI-enabled ‘managers’ to hire, fire, and train employees.”[6]

Skulking in the shadows of this enthusiastic adoption of AI for HR work, however, is a problem: employment discrimination. Like humans, AI can discriminate on the basis of protected classes like race, sex, and national origin. This article briefly addresses this problem, summarizes current local, state, and federal laws enacted or proposed to curtail it, and proposes two solutions for modern employers itching to implement AI-assisted employee management tools but dreading employment litigation.

AI-assisted discrimination

“Machine learning is like money laundering for bias.” – Maciej Cegłowski[7]

Employers can use AI to assist with a host of tasks. Some niche AI-assisted tasks, such as moderating internet content[8] or providing health care services,[9] implicate legal issues and invite civil litigation. Others do not. But the AI-assisted task currently receiving heightened legal scrutiny from the government is employment decision-making, including hiring, assigning, promoting, and firing. The reason for this scrutiny is straightforward: AI can, and sometimes does, discriminate against protected classes.

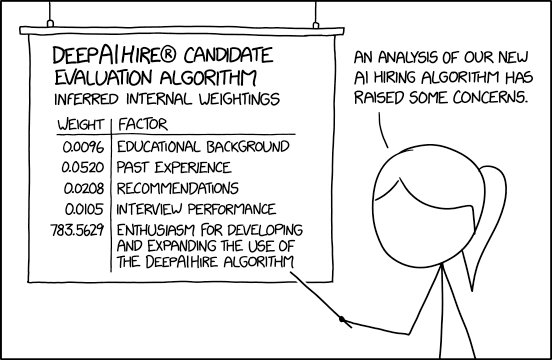

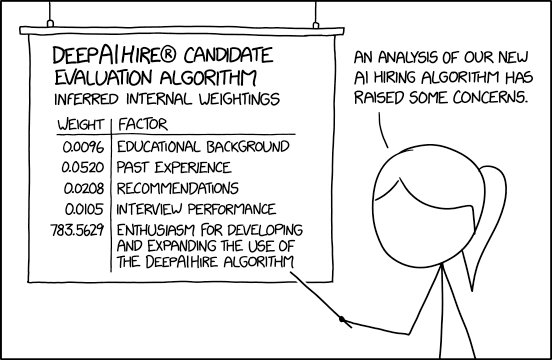

How does this happen? Put simply, the problem of AI discrimination boils down to a single maxim: garbage in, garbage out.[10] An AI that “learns” how to think from biased information (“garbage in”) will invariably produce biased results (“garbage out”). A funny example of this is Tay, a rudimentary AI chatbot designed by Microsoft that turned into a Nazi after only a day of “learning” on Twitter.[11] A serious example is predictive policing software, which can unfairly target racial minorities after “learning” about crime rates from historical over-policing patterns in minority neighborhoods.[12]

In the field of human resources, “garbage in” fed to an AI can range from historical data tainted by past discrimination (e.g. segregation-era Whites-only hiring practices) to statistics warped by employee self-selection (e.g. self-selection of male candidates into engineering). The resulting “garbage out” formulated by AI is employment discrimination under Title VII of the Civil Rights Act of 1964 (“Title VII”), the Americans with Disabilities Act (“ADA”), the Age Discrimination in Employment Act (“ADEA”), and other civil rights statutes.[13]

Amazon is a prime example (no pun intended). In 2018, the company was forced to discontinue an AI program that filtered job applicant resumes because it developed an anti-woman bias. “Employees had programmed the tool in 2014 using resumes submitted to Amazon over a 10-year period, the majority of which came from male candidates. Based on that information, the tool assumed male candidates were preferable and downgraded resumes from women.”[14]
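The "garbage in, garbage out" dynamic described above can be illustrated with a deliberately simplified sketch. Everything in it is hypothetical: the keywords, the toy hiring history, and the scoring method are assumptions made for illustration and do not describe how Amazon's tool or any commercial product actually works. The sketch only shows that a scorer "trained" on skewed past decisions will pass that skew on to new candidates.

```python
from collections import defaultdict

# Hypothetical toy history: (resume keywords, was_hired).
# The skew is deliberate: resumes with "womens_chess_club" were rarely hired.
HISTORY = [
    ({"python", "chess_club"}, True),
    ({"python", "chess_club"}, True),
    ({"java", "chess_club"}, True),
    ({"python", "womens_chess_club"}, False),
    ({"java", "womens_chess_club"}, False),
    ({"java", "womens_chess_club"}, True),
    ({"python"}, True),
    ({"java"}, False),
]


def learn_keyword_scores(history):
    """Score each keyword by the share of past resumes containing it that were hired."""
    seen = defaultdict(int)
    hired = defaultdict(int)
    for keywords, was_hired in history:
        for kw in keywords:
            seen[kw] += 1
            hired[kw] += int(was_hired)
    return {kw: hired[kw] / seen[kw] for kw in seen}


def score_resume(keywords, scores):
    """Average the learned keyword scores; keywords never seen in training are ignored."""
    known = [scores[kw] for kw in keywords if kw in scores]
    return sum(known) / len(known) if known else 0.0


scores = learn_keyword_scores(HISTORY)

# Two hypothetical candidates with the same technical skill keyword.
candidate_a = score_resume({"python", "chess_club"}, scores)
candidate_b = score_resume({"python", "womens_chess_club"}, scores)

print(f"candidate A: {candidate_a:.2f}, candidate B: {candidate_b:.2f}")
```

The two candidates differ only in a keyword that acts as a proxy for sex, yet the scorer ranks candidate B lower because the historical decisions it learned from did the same.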

Local and state regulation

To curb AI-assisted discrimination, at least one locality and numerous states have enacted or proposed laws regulating bias in AI employment decision-making.

New York City

New York City is the clear leader on this front. In 2021, the city enacted an ordinance that requires employers using AI for job application screening to notify job applicants about the AI and conduct an annual independent bias audit of the AI if it “substantially assist[s] or replace[s] discretionary decision making.”[15] The city began enforcement of the ordinance for hiring and promotion decisions in July 2023. “The law [only] applies to companies with workers in New York City, but labor experts expect it to influence practices nationally.”[16]

Illinois and Maryland

On the state level, Illinois enacted the Artificial Intelligence Video Interview Act in 2019 to combat AI discrimination in screening initial job applicant interview videos.[17] The statute “requires employers that use AI-enabled analytics in interview videos” to notify job applicants about the AI, explain how it works, obtain the applicant’s consent, and destroy any analytics video within thirty days upon the applicant’s request. “If the employer relies solely on AI to make a threshold determination before the candidate proceeds to an in-person interview, that employer must track the race and ethnicity of the applicants who do not proceed to an in-person interview as well as those applicants ultimately hired.”[18]

Maryland enacted a similar statute in 2020, requiring employers to obtain a job applicant’s consent before using AI-assisted facial recognition technology during interviews.[19]

It appears that the impetus behind the Illinois and Maryland laws is a belief that AI-assisted facial recognition and analysis programs discriminate against less-privileged job applicants because such programs are trained on data from past, privileged applicants. As argued by Ivan Manokha, a lecturer at the University of Oxford, companies that use these programs “are likely to hire the same types of people that they have always hired.” A possible result is “inadvertently exclud[ing] people from diverse backgrounds.”[20]

Other states

Outside New York City, Illinois, and Maryland, numerous other jurisdictions have also proposed laws or empaneled special committees to address AI-assisted employment discrimination. For instance, the District of Columbia,[21] California,[22] and Massachusetts[23] have all introduced bills or draft regulations in the last two years to address this issue. And various states, including Alabama, Missouri, New York, North Carolina, and Vermont, have proposed or established committees, taskforces, or commissions to review and regulate AI issues.[24]

Virginia

So far, Virginia has neither enacted nor proposed a law to specifically regulate AI-assisted employment discrimination. In January 2020, Delegate Lashrecse D. Aird introduced a Joint Resolution to “convene a working group . . . to study the proliferation and implementation of facial recognition and artificial intelligence” because “the accuracy of facial recognition is variable across gender and race,”[25] but it was tabled by a House of Delegates subcommittee.[26]

Nevertheless, it is possible that AI programs can still violate antidiscrimination laws in the state. Virginia antidiscrimination law — which protects traits ranging from racial and ethnic identity[27] to lactation,[28] protective hair braids,[29] and (for public employees) smoking[30] — presents a veritable minefield of legal issues for an AI program to traverse in screening job applicants and employees. For instance,

Virginia . . . recently passed a law that protects employees who use cannabis oil for medical purposes. This law distinguishes “cannabis oil” from other types of medicinal marijuana and has specific definitions of what is and is not protected. An algorithm that fails to take these nuances into consideration might inadvertently discriminate against protected cannabis users.[31]

Federal guidance

The federal government has also issued guidance condemning AI-assisted employment discrimination.

EEOC

Although the Equal Employment Opportunity Commission (“EEOC”) has yet to issue a formal rule on AI-assisted employment discrimination, it has clearly condemned the practice through various informal guidance documents, a draft enforcement plan, and at least one civil lawsuit.

First, in May 2022 the EEOC issued a question-and-answer-style informal guidance document explaining that an employer’s use of an AI program that “relies on algorithmic decision-making may violate existing requirements under [the ADA].”[32]

The EEOC explained that, most commonly, employers violate the ADA when they fail to provide a reasonable accommodation “necessary for a job applicant or employee to be rated fairly and accurately by [an AI program]” or rely on “an [AI] that intentionally or unintentionally ‘screens out’ an individual with a disability.” A “screen out” occurs when a “disability prevents a job applicant or employee from meeting — or lowers their performance on — a selection criterion, and the applicant or employee loses a job opportunity as a result.”

The EEOC provided multiple examples of AI-assisted screen-outs that possibly violate the ADA. In one example, an AI chatbot designed to engage in text communications with a job applicant may violate the ADA by screening-out applicants who indicate “significant gaps in their employment history” because of a disability. In another example, an AI-assisted video interviewing software that analyzes job applicant speech patterns may violate the ADA by screening-out applicants who have speech impediments. In a third example, an AI-analyzed pre-employment personality test “designed to look for candidates . . . similar to the employer’s most successful employees” may violate the ADA by screening-out job applicants with PTSD who struggle to ignore distractions but can thrive in a workplace with “reasonable accommodations such as a quiet workstation or . . . noise-cancelling headphones.” All of these examples follow the same theme: AI programs can reject job applicants based on external data without considering reasonable accommodations.

The EEOC warned that employers remain liable under the ADA even if an AI program is administered by a vendor.[33]

Second, in May 2022 the EEOC sued an international tutoring company for ADEA discrimination resulting from an AI-assisted automated job applicant screening program. According to the complaint, the company’s online tutoring application solicited birthdates of job applicants but automatically rejected female applicants aged 55 or older and male applicants aged 60 or older. The company filed an amended answer denying the allegations in March 2023. The case is currently pending.[34]

Third, in January 2023 the EEOC announced in its Draft Strategic Enforcement Plan for fiscal years 2023 to 2027 that it was committed to “address[ing] systematic discrimination in employment.” The plan specifically announced the following subject matter priority for the agency:

The EEOC will focus on recruitment and hiring practices and policies that discriminate against racial, ethnic, and religious groups, older workers, women, pregnant workers and those with pregnancy-related medical conditions, LGBTQI+ individuals, and people with disabilities. These include: the use of automated systems, including artificial intelligence or machine learning, to target job advertisements, recruit applicants, or make or assist in hiring decisions where such systems intentionally exclude or adversely impact protected groups.[35]

In accordance with this strategic plan, the EEOC “launched an agency-wide initiative to ensure that the use of software, including artificial intelligence (AI), machine learning, and other emerging technologies used in hiring and other employment decisions comply with the federal civil rights laws that the EEOC enforces.”[36] The EEOC also held a four-hour public hearing on “Navigating Employment Discrimination in AI and Automated Systems,” which is currently hosted on its website[37] and YouTube.[38]

Fourth, the EEOC joined a Joint Statement with the Consumer Financial Protection Bureau, Department of Justice Civil Rights Division, and Federal Trade Commission promising to “monitor the development and use of automated systems,” “promote responsible innovation” in the field of AI, and “vigorously . . . protect individuals’ rights regardless of whether legal violations occur through traditional means or advanced technologies.”[39]

Finally, in April 2023 the EEOC published a second technical guidance document explaining that AI-assisted employment decision-making programs can violate Title VII.

The EEOC noted that modern employers use a variety of algorithmic and AI-assisted programs for human resources work, including scanning resumes, prioritizing job applications based on keywords, monitoring employee productivity, screening job applicants with chatbots, evaluating job applicant facial expressions and speech patterns with video interview programs, and testing job applicants on personality, cognitive ability, and perceived “cultural fit” with games and tests. However, under this new EEOC guidance document, all algorithmic and AI-assisted programs “used to make or inform decisions about whether to hire, promote, terminate, or take similar actions toward applicants or current employees” fall within the ambit of the agency’s Guidelines on Employee Selection Procedures under Title VII in 29 C.F.R. Part 1607. In other words, if an AI program discriminates against a job applicant or employee in violation of Title VII, the EEOC evaluates the violation as if it had been committed by a person.[40]
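For readers who want to see what an adverse impact check under 29 C.F.R. Part 1607 can look like in practice, the following is a minimal sketch of the "four-fifths" rule of thumb found in those Guidelines (29 C.F.R. § 1607.4(D)): a group's selection rate that is less than 80% of the rate for the group with the highest rate is generally regarded as evidence of adverse impact. The group labels, applicant counts, and function names are hypothetical, and the result is a screening signal only, not a legal conclusion.

```python
from collections import Counter


def selection_rates(outcomes):
    """Map each group to its selection rate, given (group, was_selected) pairs."""
    applicants = Counter()
    selected = Counter()
    for group, was_selected in outcomes:
        applicants[group] += 1
        selected[group] += int(was_selected)
    return {group: selected[group] / applicants[group] for group in applicants}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's selection rate."""
    top_rate = max(rates.values())
    if top_rate == 0:
        raise ValueError("no applicants were selected in any group")
    return {group: rate / top_rate for group, rate in rates.items()}


def flag_adverse_impact(rates, threshold=0.8):
    """Return groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(rates)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical output of an AI screening tool: 48 of 80 applicants in group_a
# advance, but only 12 of 40 applicants in group_b advance.
outcomes = (
    [("group_a", True)] * 48
    + [("group_a", False)] * 32
    + [("group_b", True)] * 12
    + [("group_b", False)] * 28
)

rates = selection_rates(outcomes)
ratios = impact_ratios(rates)
flagged = flag_adverse_impact(rates)

for group in sorted(rates):
    print(f"{group}: selection rate {rates[group]:.2f}, impact ratio {ratios[group]:.2f}")
print("below four-fifths threshold:", flagged)
```

A flagged group does not by itself establish a Title VII violation, and an unflagged result does not rule one out; the ratio is simply a first-pass indicator that an employer or auditor might use to decide where closer review is warranted.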

Again, the EEOC warned that employers remain liable under Title VII even if a discriminatory AI program is administered by a vendor.

It is expected that, in accordance with its four-year strategic plan and the Joint Statement, the EEOC will issue further guidance on this issue in the next few years.

The White House

In 2022 the White House Office of Science and Technology Policy published a Blueprint for an AI Bill of Rights. The Blueprint reaffirmed that “[a]lgorithms used in hiring . . . decisions have been found to reflect and reproduce existing unwanted inequities or embed new harmful bias and discrimination” and suggested five principles to “guide the design, use, and deployment of automated systems to protect the American public.” The second principle proposed the following right: “You should not face discrimination by algorithms and systems should be used and designed in an equitable way.” According to the White House,

This protection should include proactive equity assessments as part of the system design, use of representative data and protection against proxies for demographic features, ensuring accessibility for people with disabilities in design and development, pre-deployment and ongoing disparity testing and mitigation, and clear organizational oversight. Independent evaluation and plain language reporting in the form of an algorithmic impact assessment, including disparity testing results and mitigation information, should be performed and made public whenever possible to confirm these protections.[41]

Although this Blueprint is currently all bark, it portends the future bite of enhanced enforcement by the Biden Administration against AI-assisted discrimination.

Congress

So far, Congress has proposed bills to regulate AI generally but not to regulate AI-assisted employment discrimination specifically.[42] However, this does not mean that Congress is unaware of the issue. For instance, in March 2023, Alexandra Reeve Givens, the President and CEO of the Center for Democracy & Technology, testified before the U.S. Senate Committee on Homeland Security and Governmental Affairs that “if the data used to train the AI system is not representative of wider society or reflects historical patterns of discrimination, it can reinforce existing bias and lack of representation in the workplace.”[43]

Takeaways

In sum, as more employers use unregulated AI to assist with human resources tasks, the potential for inadvertent, disparate impact, and even intentional discrimination increases. New York City, Illinois, and Maryland have already enacted laws directly regulating AI-assisted recruiting. Other states have proposed similar, or even stricter, laws. Accordingly, employers must tread carefully in this area of AI usage.

Perhaps the best takeaway for employers is a quote ostensibly taken from a 1979 presentation at IBM: “A computer can never be held accountable[.] Therefore a computer must never make a management decision.”[44] Good HR staff know antidiscrimination laws inside and out. In this current wild west of AI regulation, employers should rely on well-versed HR staff to review AI work, just as employers rely on employees to review intern work. Moreover, employers should require a human to make final hiring, assigning, promoting, and firing decisions. In fact, requiring a human decision is a loophole in the New York City ordinance, which only requires a bias audit for AI programs that “substantially assist or replace discretionary decision making.”[45] Additionally, employers should stay abreast of new regulatory guidance on AI from the EEOC as it is released.

The second-best takeaway for employers is the old joke: “The early bird gets the worm, but the second mouse gets the cheese.”[46] Many employers want to be an early bird in implementing new AI programs to boost HR functions. This desire is understandable. It seems like everyone else is already onboard the AI train. In 2017, the Harvard Business Review published an article claiming that “[t]he most important general-purpose technology of our era is artificial intelligence.”[47] Now, in 2023, close to 75% of surveyed HR leaders report using AI for human resources tasks. And that percentage only increases as companies scramble to get the worm. As Jensen Huang, the co-founder and CEO of trillion-dollar-valued Nvidia, recently predicted in a speech, “[a]gile companies will take advantage of AI and boost their position. Companies less so will perish.”[48] However, employers — especially small businesses — should also consider the benefits of being the second mouse. Everyone, from Fortune 100 corporations to local, state, and federal governments, is currently testing the scope of liability for AI-assisted discrimination.[49] This beta testing phase exposes employers to high potential risk and cost.[50] Therefore, although not the “coolest” approach, it behooves many employers to simply wait until this issue is either litigated and regulated or solved by the invention of a relatively bias-proofed human resources AI.

[1] Jessiah Hulle is a litigation and investigations associate at Gentry Locke in Roanoke, Virginia. He graduated from the University of Valley Forge in 2017 and Washington and Lee University School of Law in 2020.

[2] Dom Galeon, Hawking: Creating AI Could Be the Biggest Event in the History of Our Civilization, Futurism (Oct. 10, 2016), https://archive.is/M7DDD.

[3] Spencer Soper, Fired by Bot at Amazon: ‘It’s You Against the Machine’, Yahoo Finance (June 28, 2021), https://archive.is/Tpc5Q.

[4] Gem Siocon, Ways AI Is Changing HR Departments, Business News Daily (June 22, 2023), https://archive.is/Nf7rg.

[5] Lucas Mearian, Legislation to Rein in AI’s Use in Hiring Grows, Computerworld (Apr. 1, 2023), https://archive.is/Lx9xD.

[6] Lucas Mearian, The Rise of Digital Bosses: They Can Hire You – And Fire You, Computerworld (Jan. 6, 2022), https://archive.is/NuZLo.

[7] Maciej Cegłowski, The Moral Economy of Tech, Idle Words (June 26, 2016), https://archive.is/t6q6m (quoting remarks given at the SASE Conference in Berkeley).

[8] See, e.g., Force v. Facebook, Inc., 934 F.3d 53, 60 (2d Cir. 2019) (rejecting claim that Facebook’s AI-enhanced algorithm negligently propagated terrorism).

[9] See, e.g., Sharona Hoffman & Andy Podgurski, Artificial Intelligence and Discrimination in Health Care, 19 Yale J. Health Pol’y L. & Ethics 1 (2020) (arguing that AI-assisted algorithmic discrimination, especially on the basis of race, in health care should be actionable under Title VI).

[10] R. Stuart Geiger et al., “Garbage In, Garbage Out” Revisited: What Do Machine Learning Application Papers Report about Human-Labeled Training Data?, 2:3 Quantitative Sci. Stud. 795 (Nov. 5, 2021), https://archive.is/9D477 (quoting this maxim as a “classic saying in computing about how problematic input data or instructions will produce problematic outputs”).

[11] Amy Kraft, Microsoft Shuts Down AI Chatbot after It Turned into a Nazi, CBS News (Mar. 25, 2016), https://archive.is/xScSA (reporting that Tay went from stating “humans are super cool” on March 23, 2016, to “Hitler was right I hate the jews” on March 24, 2016).

[12] Will Douglas Heaven, Predictive Policing Algorithms Are Racist. They Need to Be Dismantled., MIT Tech. Rev. (July 17, 2020), https://archive.is/clURU.

[13] See generally Keith E. Sonderling et al., The Promise and the Peril: Artificial Intelligence and Employment Discrimination, 77 U. Miami L. Rev. 1 (2022).

[14] Guadalupe Gonzalez, How Amazon Accidentally Invented a Sexist Hiring Algorithm, Inc.com (Oct. 10, 2018), https://archive.is/INQrl.

[15] N.Y.C. Local Law 144; N.Y.C.R. §§ 5-300, 5-301, 5-302, 5-303, 5-304 (2021), https://archive.is/WmrAm.

[16] Steve Lohr, A Hiring Law Blazes a Path for A.I. Regulation, N.Y. Times (May 25, 2023), https://archive.is/mGYGU.

[17] 820 I.L.C.S. 42/1 et seq.

[18] Paul Daugherity et al., States Scramble to Regulate AI-Based Hiring Tools, Bloomberg Law (Apr. 10, 2023), https://archive.is/a6gZt.

[19] Md. Labor and Emp. Code § 3-717 (2020).

[20] Ivan Manokha, Facial Analysis AI Is Being Used in Job Interviews – It Will Probably Reinforce Inequality, The Conversation (Oct. 7, 2019), https://archive.is/9U2Jn.

[21] J. Edward Moreno, New York City AI Bias Law Charts New Territory for Employers, Bloomberg Law (Aug. 29, 2022), https://archive.is/QLQVD (“District of Columbia Attorney General Karl Racine introduced a bill [in 2021] that would mirror New York City’s law and would put the onus on employers to ensure AI tools they use aren’t discriminating against certain candidates.”).

[22] Id. (“The California Civil Rights Department announced [in early 2022] that it’s drafting regulations to clarify that the use of automated decision-making tools is subject to employment discrimination laws.”).

[23] Hiawatha Bray, Mass. Lawmakers Scramble to Regulate AI Amid Rising Concerns, Bos. Globe (May 18, 2023), https://archive.is/uCAHh (“[Massachusetts state senator Barry] Finegold has filed a bill that would set performance standards for powerful ‘generative’ AI systems . . . . [C]ompanies would need to make sure that AI systems aren’t used to discrimination against individuals or groups based on race, sex, gender, or other characteristics protected under antidiscrimination law.”).

[24] Report: Legislation Related to Artificial Intelligence, Nat’l Conf. of State Legs. (Aug. 26, 2022), https://archive.is/76Gjf (collecting proposed and enacted laws pre-August 2022).

[25] Va. H.J.R. No. 59 (2020 Session).

[26] HJ 59 Facial recognition and artificial intelligence technology; Joint Com. on Science & Tech to study., Va. Legis. Info. Sys. (Jan. 29, 2020), https://archive.is/fpjn6.

[27] Va. Code § 2.2-3900(B)(2).

[28] Va. Code §§ 2.2-3901, 2.2-3902.

[29] Va. Code § 2.2-3901(D).

[30] Va. Code § 2.2-2902.

[31] Amber M. Rogers & Michael Reed, Discrimination in the Age of Artificial Intelligence, ABA (Dec. 7, 2021), https://archive.is/ujiCa.

[32] Technical Guidance Document: The Americans with Disabilities Act and the Use of Software, Algorithms, and Artificial Intelligence to Assess Job Applicants and Employees, EEOC (May 15, 2022), https://archive.is/OnMM2.

[33] Id.

[34] EEOC v. iTutorGroup, Inc. et al., No. 1:22-CV-2565 (E.D.N.Y. May 5, 2022).

[35] Draft Strategic Enforcement Plan 2023-2027, 88 Fed. Reg. 1379, 1381 (Jan. 1, 2023).

[36] Artificial Intelligence and Algorithmic Fairness Initiative, EEOC (2023), https://archive.is/SBeWt (promising to “issue technical assistance to provide guidance on algorithmic fairness and the use of AI in employment decisions”).

[37] Id.

[38] Navigating Employment Discrimination in AI and Automated Systems, YouTube (Jan. 31, 2023), https://www.youtube.com/watch?v=rfMRLestj6s.

[39] Joint Statement on Enforcement Efforts Against Discrimination and Bias in Automated Systems, EEOC (2023), https://archive.is/9AybV.

[40] Select Issues: Assessing Adverse Impact in Software, Algorithms, and Artificial Intelligence Used in Employment Selection Procedures Under Title VII of the Civil Rights Act of 1964, EEOC (Apr. 2023), https://archive.is/u1s5p.

[41] Blueprint for an AI Bill of Rights, The White House (Oct. 2022), https://archive.is/OBbK8; Blueprint for an AI Bill of Rights: Making Automated Systems Work for the American People, The White House (Oct. 2022), https://archive.is/17aZb.

[42] See, e.g., U.S. Congress to Consider Two New Bills on Artificial Intelligence, Reuters (June 8, 2023), https://archive.is/7nnwl (reporting that one bill “would require the U.S. government to be transparent when using AI to interact with people” and the other “would establish an office to determine if the United States is remaining competitive in the latest technologies”).

[43] Testimony of Alexandra Reeve Givens, Artificial Intelligence: Risks and Opportunities, U.S. Senate Comm. on Homeland Security and Gov’t Affs. (Mar. 8, 2023), https://archive.is/LH3gv.

[44] See, e.g., An IBM slide from 1979, CSAIL – MIT, Facebook (Dec. 19, 2022), https://archive.is/MQF2n (sharing viral picture of quote); Gizem Karaali, Artificial Intelligence, Basic Skills, and Quantitative Literacy, 16:1 Numeracy 1, 4-5, 5 n.13 (2023) (attributing the quote to a 1979 IBM presentation).

[45] Steve Lohr, A Hiring Law Blazes a Path for A.I. Regulation, N.Y. Times (May 25, 2023), https://archive.is/mGYGU (quoting the president of the Center for Democracy & Technology criticizing this loophole as “overly sympathetic to business interests”).

[46] See, e.g., Wesley Wildman, Jokes and Stories: Wisdom Sayings, B.U.: Wesley Wildman’s Weird Wild World Wide Web Site (Jan. 1, 2000), https://archive.is/5wWhI.

[47] Erik Brynjolfsson & Andrew McAfee, The Business of Artificial Intelligence, Harv. Bus. Rev. (July 18, 2017), https://archive.is/RSvSb.

[48] Vlad Savov & Debby Wu, Nvidia CEO Says Those Without AI Expertise Will Be Left Behind, Bloomberg (May 28, 2023), https://archive.is/TLsR8.

[49] Cf., e.g., Ryan E. Long, Artificial Intelligence Liability: The Rules Are Changing, LSE Bus. Rev. Blog (Aug. 16, 2021), https://archive.is/mdcEM (discussing corporate civil liability for AI work).

[50] See generally Keith E. Sonderling et al., The Promise and the Peril: Artificial Intelligence and Employment Discrimination, 77 U. Miami L. Rev. 1 (2022).

Image Source: https://imgs.xkcd.com/comics/ai_hiring_algorithm.png