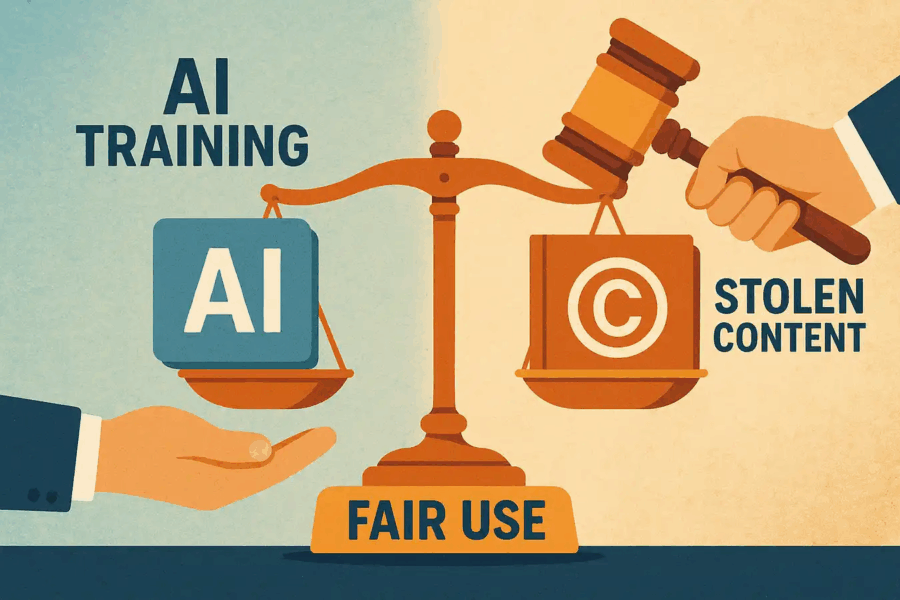

The Implications of Artificial Intelligence for Creators and the Role of the Fair Use Doctrine

By: Georgina Michelle

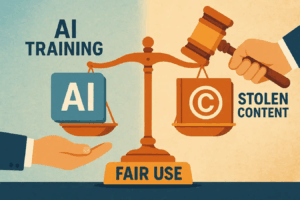

Artificial intelligence (AI) has become an increasingly integral part of daily life, offering many benefits that ease everyday tasks.[1] Proponents view these developments as a leap forward in human innovation and ingenuity, while opponents see them as stifling creativity.[2] On one hand, AI tools can increase efficiency by handling repetitive tasks on autopilot, freeing up time for projects that demand greater effort.[3] On the other hand, the rise of AI raises a host of ethical and moral dilemmas, concerns about personal privacy, and new forms of cybercrime.[4] Among these ethical issues is a growing concern for the protection of intellectual property, with opponents calling for an end to "content farming," the practice of AI systems harvesting existing content to optimize their own performance.[5]